The potential of street-level imagery (and deep learning) to map the world

Reliable geodata is essential for humanitarian logistics, disaster response, and infrastructure planning. Yet critical information such as road surface conditions or infrastructure accessibility is incomplete or missing in many regions of the world.

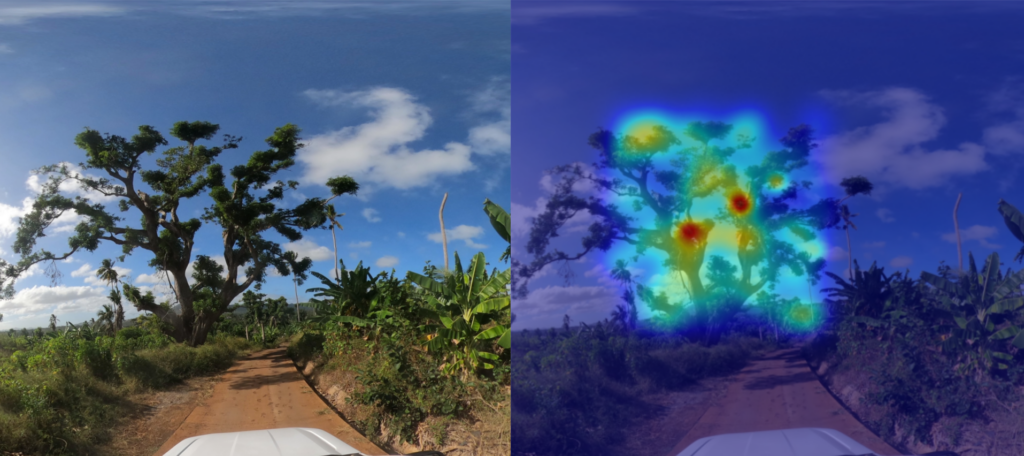

To gather the needed information, we often need a real-life, eye-level perspective. This is why street-level imagery, combined with deep learning methods, holds great potential to close these gaps.

GeoAI research based on street-level imagery depends on the availability of imagery, with platforms such as Mapillary and Panoramax playing a key role in offering open, freely accessible street-level images. At the same time, we need more low-barrier methods to collect and process new imagery in order to truly include data from all over the world.

Once we have the imagery, the next step is to develop automated pipelines that transform images into structured, georeferenced data products. Many promising methods combine street-level, satellite, and Unmanned Aerial Vehicle (UAV) data to best capture infrastructure characteristics, giving deep learning (DL) models a comprehensive perspective.

From road classification to waste management and inclusive sidewalks

Our work with street-level imagery began with a central question: how can deep learning help fill persistent gaps in the world map?

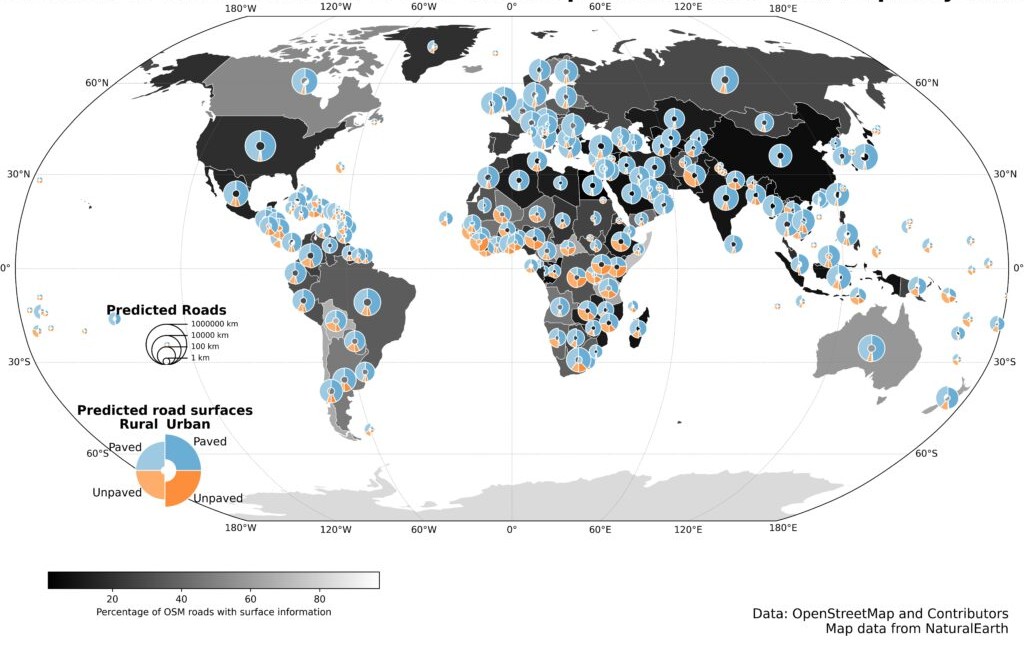

In 2024, HeiGIT released a global road surface dataset that distinguishes paved and unpaved roads using state-of-the-art deep learning models trained on crowdsourced imagery. While only about one third of roads in OpenStreetMap contain road surface information, our dataset expanded global coverage to 36.7 million kilometers. A new data release last year further improved consistency and achieved 89.2% classification accuracy across 9.2 million kilometers of major roads. The updated dataset can be used for routing, accessibility analysis, and logistics planning and is openly available via the Humanitarian Data Exchange.

This work demonstrated how image classification can systematically enhance the availability of geographic data worldwide. So we kept expanding our work in DL image classification, increasingly adopting multimodal approaches that combine street-level, aerial, and satellite imagery with other environmental data, to strengthen the robustness of the models.

With a pilot project in Dar es Salaam, we applied computer vision techniques to high-resolution street-level imagery to detect waste accumulations along the streets, supporting evidence-based decision-making in flood-prone areas. In parallel, we have also used street-level images to identify environmental features important to older adults and to design a routing system tailored to their needs. Moreover, we have been exploring how street-level images can be used to estimate the width of sidewalks, which is critical for inclusive walkability assessments. This research led to the HeiMeterStick prototype.

A weather-adaptive routing service for all road conditions

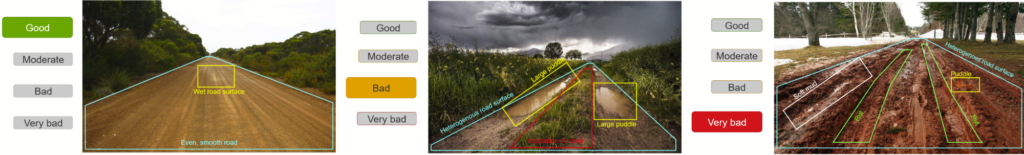

Building on our road surface dataset and previous experience in DL image classification, we have started working on the next big thing: the AI Logistic Awareness System (AILAS), a weather-adaptive routing system for regions with predominantly unpaved roads. The project addresses a critical challenge in humanitarian logistics: road passability can change within hours due to rainfall, yet up-to-date information on current road surface conditions is rarely available in remote areas.

AILAS integrates street-level imagery, deep learning classification, and environmental data within a probabilistic framework. The project goal is to support humanitarian logistics planners with reliable information on the state of roads: by combining road surface classification with rainfall forecasts and soil moisture data, the system predicts how the passability of roads is likely to change under different weather conditions. These changing passability scores are to be integrated into openrouteservice for routing. This enables logistics planners to predict, for example, that driving along a given route will likely take longer or might even be impossible after heavy rainfall, helping them plan routes more efficiently.
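To make the idea of a probabilistic passability score more concrete, here is a minimal Python sketch. It is not the AILAS model: the `RoadSegment` fields, the `passability` function, the logistic form, and all coefficients are assumptions chosen purely for illustration. The sketch mixes two cases, weighted by the classifier's confidence that a segment is unpaved: paved roads are assumed to stay passable almost always, while the passability of unpaved roads drops with forecast rainfall and soil moisture.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RoadSegment:
    """Inputs for one road segment (all field names are hypothetical)."""
    p_unpaved: float        # classifier probability that the surface is unpaved, 0..1
    rainfall_mm_24h: float  # forecast rainfall for the next 24 hours, in mm
    soil_moisture: float    # soil moisture as a fraction, 0..1


def _sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def passability(
    segment: RoadSegment,
    *,
    bias: float = 4.0,           # illustrative value, not calibrated
    w_rain: float = 0.05,        # illustrative penalty per mm of rain
    w_soil: float = 4.0,         # illustrative penalty for wet soil
    p_pass_paved: float = 0.99,  # assumption: paved roads are barely affected
) -> float:
    """Return an illustrative probability that the segment is passable."""
    if not 0.0 <= segment.p_unpaved <= 1.0:
        raise ValueError("p_unpaved must be in [0, 1]")
    if not 0.0 <= segment.soil_moisture <= 1.0:
        raise ValueError("soil_moisture must be in [0, 1]")
    if segment.rainfall_mm_24h < 0.0:
        raise ValueError("rainfall_mm_24h must be non-negative")

    # Passability of an unpaved road decreases with rain and soil moisture.
    p_pass_unpaved = _sigmoid(
        bias - w_rain * segment.rainfall_mm_24h - w_soil * segment.soil_moisture
    )
    # Mix both cases, weighted by the surface classifier's confidence.
    return (1.0 - segment.p_unpaved) * p_pass_paved + segment.p_unpaved * p_pass_unpaved


dry = RoadSegment(p_unpaved=0.9, rainfall_mm_24h=0.0, soil_moisture=0.1)
wet = RoadSegment(p_unpaved=0.9, rainfall_mm_24h=80.0, soil_moisture=0.6)
print(f"dry: {passability(dry):.2f}, wet: {passability(wet):.2f}")
```

In a setup like this, a routing engine could translate a low score into a slower expected speed or exclude the segment entirely. How such scores are actually weighted within openrouteservice is a design decision of the project and is not shown here.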

How we build a street-level imagery collection

The first key challenge of the AILAS project, long before we start fine-tuning machine learning models, is collecting enough street-level imagery of roads under varied weather conditions to be able to train the models, as such imagery is not yet widely available. A core principle of the AILAS project is its partner-oriented data collection approach.

The project's pilot region is Madagascar, where we collaborate closely with the Croix-Rouge Malagasy to define needs, incorporate local practitioners' know-how, and collect the imagery. Together with our partners, we designed a workflow that minimizes technical barriers for imagery collection. Two field teams of Croix-Rouge Malagasy have been equipped with action cameras mounted on their emergency vehicles. The drivers learn how to work with the cameras through a simple multilingual tutorial on the IFRC GIS Training Platform. They can configure the cameras in a few seconds, without any prior knowledge, by simply scanning a QR code. With this method, field teams have already captured more than 20,000 street-level images throughout Madagascar.

The collected imagery is then uploaded to a private Panoramax instance, ensuring free and transparent usage. The crowdsourced platform Panoramax is the natural solution to store and process the collected street-level imagery, as it is a free, fully open source platform with a federated architecture in which anyone can host an instance and freely adapt the pipelines to show objects, e.g. by adding annotations that are relevant for humanitarian and logistics purposes. Moreover, faces and license plates are automatically blurred after the upload to Panoramax, and the platform offers complete control over data storage and access policies, ensuring high privacy and data protection.

An overview of the technical architecture we developed, including a code snippet, can be found in this blog article.

Next steps

All these efforts demonstrate the potential of street-level imagery and deep learning methods to provide missing geodata where it is most urgently needed. From global road surface datasets to weather-adaptive routing and accessibility metrics, eye-level images can be a game changer in DL-based research.

This is why we are working with our partners to further expand imagery collection, refine multimodal classification models, and publish more open-access datasets with insights on street-level infrastructure that can be useful to disaster management practitioners, logistics experts, urban planners, and researchers alike.